What is Kubernetes?

We are at an interesting phase in software development, where the focus has clearly shifted to writing distributed, scalable applications that can be deployed, run and monitored effectively.

Docker took the first steps toward solving this piece of the puzzle with a toolset that made it easy for teams to build, ship and run software. Because we can now bundle an application and all of its dependencies into a single package, reuse common layers in the stack, and run the application anywhere Docker is supported, much of the pain of distributing and running applications reliably has gone away.

Docker has brought many efficiencies to software development, eliminating much of the friction that used to arise whenever an application changed hands, whether across teams (dev → QA → operations) or across environments (dev → test → stage → production).

But is Docker Enough?

If you look at today's applications that must serve a large number of users, running them in a container or two is not enough. You need a cluster of containers, spread across regions and load balanced. And those are just a few of the requirements. Today's large-scale distributed deployments are also expected to provide:

- Management of application deployments across containers

- Health checks across containers

- Monitoring of these container clusters

- The ability to do all of the above programmatically via a toolset/API

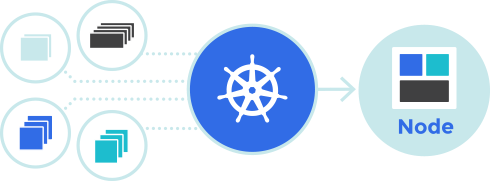

This is where Kubernetes comes in. According to its official site, Kubernetes is an open-source system for automating the deployment, scaling and management of containerized applications.

Kubernetes draws on software and experience from Google, which has run its own workloads on an internal cluster manager called Borg for more than a decade. Borg was the precursor to today's Kubernetes.

Since it was announced and its code released as open source a couple of years ago, Kubernetes has been one of the most popular repositories on GitHub. The project has significant backing from multiple vendors, and one of its most notable features is that it runs across multiple cloud providers and even on on-premises infrastructure. It is not tied to any particular vendor.

Is Kubernetes The Only Container Cluster Management Software Out There?

Definitely not. A couple of competing solutions exist: Docker Swarm and Apache Mesos. However, Kubernetes is the current leader in both features and momentum. Docker Swarm still lacks the features that would make it a serious contender, while Apache Mesos comes with its own complexity.

Because Kubernetes comes with its own toolset, you can configure and run it on multiple cloud providers, on on-premises servers and even on your local machine.

Google Cloud Platform also offers Google Container Engine (GKE), a managed service built on top of Kubernetes that makes Kubernetes cluster management a breeze. Kubernetes has clearly gained significant mindshare and is being promoted as the go-to infrastructure software for running distributed container workloads.

Kubernetes does come with a learning curve, since it introduces concepts such as Node, Pod, Replication Controller, Health Checks, Services, Labels and more. In a future blog post, we will break down these building blocks to understand how Kubernetes orchestrates the show.
