Key features to look for in Container offerings on Public Cloud

1. Introduction

In the first part of this multi-part blog series, we looked at the Cloud Conundrum and at why enterprises and ISVs want to build cloud apps on a Cloud Agnostic Architecture. That goal is what makes Container Orchestration Platforms necessary for such apps. We also briefly touched upon the various Container Orchestration Platforms available on Public as well as Private Cloud.

In this, the second part of the blog series, we cover containerization-related offerings in more detail. We focus on Public Cloud container offerings from AWS, Azure, and Google Cloud: Container Registries, Container as a Service, and Kubernetes as a Service. Container Orchestration Platforms support features for developing and deploying cloud apps, as well as for operating the platform itself. For the purpose of this blog, we have considered the following features, which are the most relevant and important to the overall DevOps community:

Key features of Container Orchestration Platforms


As we continue this blog series, we will touch upon additional selection mechanisms and some real-life examples of how enterprises and ISVs are choosing these platforms.

Other containerization platforms are also available, such as Rancher, Platform9, Helios, and IBM Cloud Kubernetes Service. For the purpose of this blog, however, we limit ourselves to the above-mentioned platforms, based on popularity, relevance, and maturity.

2. Public Cloud Infrastructure

Popular Public Cloud providers offer three main kinds of containerization services:

- Container Registry

- Container as a Service

- Kubernetes as a Service

2.1. Container Registry

A Container Registry is a service for storing and retrieving Docker container images. AWS, Azure, and Google Cloud Platform all offer this service, which lets you build and manage your own private container repositories.

Key features of Container Registries


2.1.1. Management

Ease of management and integration with Cloud services

All the public clouds offer their own private Docker container registries. All of these registries support standard features such as creating and storing Docker container images, along with version control through tagging. Google also provides a standalone tool, Google Container Builder (since renamed Cloud Build). It can import source code from leading source code repositories such as GitHub, Bitbucket, and Cloud Source Repositories, execute a build, and produce artifacts such as container images.

The registries are well integrated with most other Docker container-related services as well as Kubernetes related services on the Public Cloud. For example, Amazon’s Elastic Container Registry (ECR) is well integrated with Amazon’s Elastic Container Service (ECS) and Elastic Kubernetes Service (EKS). Azure and Google Cloud provide similar integration and capabilities.
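The sketch below makes the naming conventions concrete by showing how one tagged image is addressed in each provider's registry. It assumes the commonly documented hostname patterns: `<account>.dkr.ecr.<region>.amazonaws.com` for ECR, `<registry>.azurecr.io` for Azure Container Registry, and `gcr.io/<project>` for Google Container Registry. The helper functions, account ID, project, and registry names are hypothetical placeholders.

```python
# Sketch: fully qualified image references in each provider's registry.
# Assumption: hostname patterns as commonly documented; all IDs and names
# used below are hypothetical placeholders.


def ecr_image(account_id: str, region: str, repo: str, tag: str) -> str:
    # Amazon ECR: one private registry per AWS account and region.
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repo}:{tag}"


def acr_image(registry: str, repo: str, tag: str) -> str:
    # Azure Container Registry: the registry name is a subdomain of azurecr.io.
    return f"{registry}.azurecr.io/{repo}:{tag}"


def gcr_image(project: str, repo: str, tag: str) -> str:
    # Google Container Registry: the GCP project ID is the first path segment.
    return f"gcr.io/{project}/{repo}:{tag}"


if __name__ == "__main__":
    # The same (hypothetical) image, tagged "1.4.2", in each registry.
    print(ecr_image("123456789012", "us-east-1", "shop/api", "1.4.2"))
    print(acr_image("myregistry", "shop/api", "1.4.2"))
    print(gcr_image("my-project", "shop/api", "1.4.2"))
```

The tag after the colon is what provides version control. For example, pushing `shop/api:1.4.3` alongside `shop/api:1.4.2` keeps both versions in the same repository.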

Support for Container Images formats

Amazon, Azure, and Google all support the Docker Image Manifest V2 and Open Container Initiative (OCI) image formats, and all three expose the Docker Registry HTTP API V2 REST endpoint.
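All three registries speak the Docker Registry HTTP API V2, so the same request paths work against any of them. The following minimal sketch assumes you already hold a bearer token; obtaining one is provider-specific and is left out. The code only builds the requests, and the helper names are our own.

```python
# Sketch: Docker Registry HTTP API V2 requests (built, not sent).
# Assumption: `token` is a bearer token already obtained from the provider.
from urllib.request import Request

DOCKER_MANIFEST_V2 = "application/vnd.docker.distribution.manifest.v2+json"
OCI_MANIFEST_V1 = "application/vnd.oci.image.manifest.v1+json"


def tags_list_request(host: str, repo: str, token: str) -> Request:
    # GET /v2/<name>/tags/list returns the repository name and its tags.
    return Request(
        f"https://{host}/v2/{repo}/tags/list",
        headers={"Authorization": f"Bearer {token}"},
    )


def manifest_request(host: str, repo: str, reference: str, token: str) -> Request:
    # GET /v2/<name>/manifests/<tag-or-digest>; the Accept header lists the
    # manifest formats (Docker V2 and OCI) this client understands.
    return Request(
        f"https://{host}/v2/{repo}/manifests/{reference}",
        headers={
            "Authorization": f"Bearer {token}",
            "Accept": f"{DOCKER_MANIFEST_V2}, {OCI_MANIFEST_V1}",
        },
    )
```

Passing either request to `urllib.request.urlopen` would send it. Only the hostname and the token change from one cloud to another.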

Lifecycle management for container images

To help you manage multiple versions and tags of your images, Amazon lets you define lifecycle policies that automatically clean up old or unused images.
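An ECR lifecycle policy is a JSON document made up of prioritized rules. The sketch below builds one such policy: it expires untagged images after 14 days and then keeps only the 10 most recent images. It also adds a local dry run. The thresholds are arbitrary examples, and the dry-run function is our own simplified approximation, not ECR's actual evaluation logic.

```python
# Sketch: an ECR lifecycle policy plus a simplified local dry run.
# The dry run is our own approximation (it ignores tag-prefix filters, etc.).
import json
from datetime import datetime, timedelta

POLICY = {
    "rules": [
        {
            "rulePriority": 1,
            "description": "Expire untagged images older than 14 days",
            "selection": {"tagStatus": "untagged", "countType": "sinceImagePushed",
                          "countUnit": "days", "countNumber": 14},
            "action": {"type": "expire"},
        },
        {
            # A rule with tagStatus "any" must have the highest rulePriority.
            "rulePriority": 2,
            "description": "Keep only the 10 most recent images",
            "selection": {"tagStatus": "any", "countType": "imageCountMoreThan",
                          "countNumber": 10},
            "action": {"type": "expire"},
        },
    ]
}


def _matches(tag_status: str, tagged: bool) -> bool:
    return tag_status == "any" or tagged == (tag_status == "tagged")


def dry_run(images: list[dict], now: datetime, policy: dict = POLICY) -> set[str]:
    """images: dicts with "id", "tagged" (bool), "pushed_at" (datetime)."""
    expired: set[str] = set()
    for rule in sorted(policy["rules"], key=lambda r: r["rulePriority"]):
        sel = rule["selection"]
        # Skip images already expired by a higher-priority rule.
        candidates = [img for img in images
                      if img["id"] not in expired and _matches(sel["tagStatus"], img["tagged"])]
        if sel["countType"] == "sinceImagePushed":
            cutoff = now - timedelta(days=sel["countNumber"])  # countUnit assumed "days"
            expired |= {img["id"] for img in candidates if img["pushed_at"] < cutoff}
        elif sel["countType"] == "imageCountMoreThan":
            newest_first = sorted(candidates, key=lambda img: img["pushed_at"], reverse=True)
            expired |= {img["id"] for img in newest_first[sel["countNumber"]:]}
    return expired


if __name__ == "__main__":
    print(json.dumps(POLICY, indent=2))
```

The serialized policy (`json.dumps(POLICY)`) is what you would hand to ECR, for example through boto3's `put_lifecycle_policy(repositoryName=..., lifecyclePolicyText=...)`. Running the dry run first is a cheap way to check which images a rule set would remove.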

Platform security

All the Cloud Registries are by default private and secure. You can maintain complete control over who can view, access and download an image. Amazon and Azure by default encrypt all the stored images. Google also supports encryption of images through customer-managed keys.
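On Amazon ECR, one way to control who can download an image is a repository policy. The sketch below grants another AWS account pull-only access. The account ID is a placeholder, and push actions are deliberately left out.

```python
# Sketch: an ECR repository policy granting pull-only access to one account.
# The account ID passed in is a placeholder.
import json

PULL_ACTIONS = [
    "ecr:BatchCheckLayerAvailability",
    "ecr:BatchGetImage",
    "ecr:GetDownloadUrlForLayer",
]


def pull_only_policy(account_id: str) -> str:
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowPullFromAccount",
                "Effect": "Allow",
                "Principal": {"AWS": f"arn:aws:iam::{account_id}:root"},
                # Read-only: no ecr:PutImage or other push actions.
                "Action": PULL_ACTIONS,
            }
        ],
    }
    return json.dumps(policy, indent=2)
```

You could apply the returned text with boto3's `set_repository_policy(repositoryName=..., policyText=...)`. The pulling identity also needs `ecr:GetAuthorizationToken` in its own IAM policy in order to log in to the registry.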

2.1.2. Execution

Registry data replication and high availability

All three Public Clouds provide geo-replication and high availability. Amazon stores your images in Amazon Simple Storage Service (S3), so they are replicated and highly available by default. Azure lets you control geo-replication, at an additional cost. Google also ensures that your container images are replicated globally and highly available.

Public access for Container Registry

Google lets you make your container images publicly accessible by making the underlying storage public, which makes it the best choice for this requirement. With Amazon and Azure, you control access to the registry through authentication roles and policies.

Support for mirroring Docker Hub

Google offers a Docker Hub mirror. It caches frequently accessed Docker Hub images and keeps the stored copies in sync with Docker Hub.
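To use Google's Docker Hub mirror from your own Docker hosts, point the Docker daemon at it. The daemon reads mirror URLs from the `registry-mirrors` key in its `daemon.json` configuration file. The sketch below assumes the mirror is reachable at `https://mirror.gcr.io` and only merges that setting into an existing configuration. Writing the file (commonly `/etc/docker/daemon.json` on Linux) and restarting the daemon are left to you.

```python
# Sketch: add Google's Docker Hub mirror to a Docker daemon configuration.
# Assumption: the mirror URL below; the file write and daemon restart are omitted.
import json

GOOGLE_MIRROR = "https://mirror.gcr.io"


def with_registry_mirror(config: dict, mirror: str = GOOGLE_MIRROR) -> dict:
    # Copy so the caller's dict is untouched, then add the mirror only once.
    updated = dict(config)
    mirrors = list(updated.get("registry-mirrors", []))
    if mirror not in mirrors:
        mirrors.append(mirror)
    updated["registry-mirrors"] = mirrors
    return updated


if __name__ == "__main__":
    existing = {"log-driver": "json-file"}  # example of a pre-existing setting
    print(json.dumps(with_registry_mirror(existing), indent=2))
```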

Vulnerability scanning for images in the Registry

Google supports vulnerability scanning for the images stored in its registry, helping to ensure that your container images are safe to deploy. It can also automatically block risky images from being deployed to Google Kubernetes Engine.
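Conceptually, blocking risky images means checking scan results before a deployment is allowed. The sketch below illustrates only that idea. The `Finding` type, the severity scale, and the default threshold are hypothetical. On Google Cloud, this kind of enforcement is configured in Google's own services (such as Binary Authorization) rather than written by hand.

```python
# Sketch: a hypothetical deploy-time gate based on vulnerability findings.
# Finding, SEVERITY_ORDER, and the default threshold are illustrative only.
from dataclasses import dataclass

SEVERITY_ORDER = ["LOW", "MEDIUM", "HIGH", "CRITICAL"]


@dataclass
class Finding:
    cve_id: str
    severity: str  # one of SEVERITY_ORDER


def allow_deploy(findings: list[Finding], block_at: str = "HIGH") -> bool:
    """Return False if any finding is at or above the blocking severity."""
    threshold = SEVERITY_ORDER.index(block_at)
    return all(SEVERITY_ORDER.index(f.severity) < threshold for f in findings)
```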

2.2. Container as a Service Platforms

Before Kubernetes was widely adopted in the Cloud ecosystem, most Cloud providers had already rolled out some form of Container Orchestration Service. This section covers the Container Service platforms offered by Amazon, Azure, and Google.

2.2.1. Management

Ease of set-up, administration, and integration with Cloud Services

All the cloud providers support some form of set-up and administration. Compared with Azure Container Instances and container deployment on Google Compute Engine, Amazon ECS is by far the most mature and complete Container Service Platform.

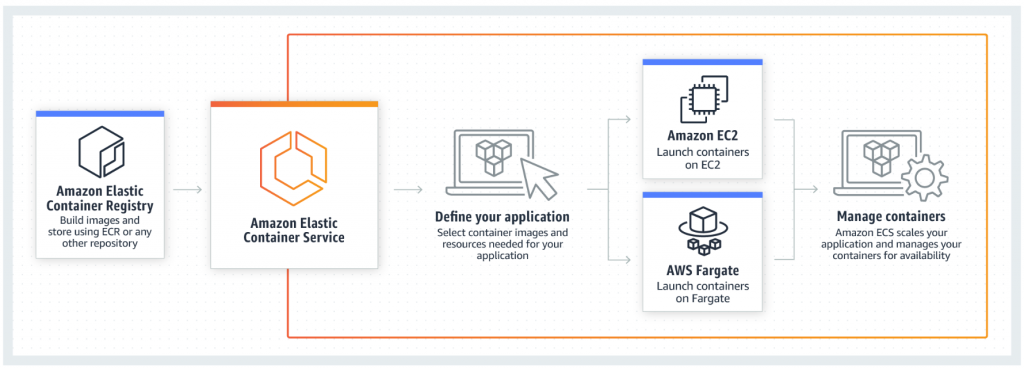

Source: Amazon.com

Amazon ECS can be the right choice for deploying single- or multi-container applications. It fully manages the cluster and the Docker containers, which makes container deployment very simple. Azure Container Instances and containers on Google Compute Engine may not be the right choice for complex multi-container deployments.

On each cloud, the container platform is integrated with other services such as Container Registry, Access Control and Management, Networking, and Load Balancing.

Underlying VM infrastructure management

On Amazon ECS, the answer is both ‘yes’ and ‘no’, because ECS provides two launch types for your containers. With the EC2 launch type, you manage your own EC2 VMs. With the Fargate launch type, deployment is completely serverless.

With Azure Container Instances, you don’t have to manage your own VMs, but on Google Cloud you have to manage the underlying VMs that run your container workloads.
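The difference between the two Amazon ECS launch types also shows up in the task definition. The sketch below builds parameters for the ECS RegisterTaskDefinition API, in the shape accepted by boto3's `register_task_definition`. The family name, image, and sizes are placeholders, and nothing is sent to AWS.

```python
# Sketch: ECS task-definition parameters for the EC2 vs Fargate launch types,
# shaped like the arguments to boto3's ecs.register_task_definition.
# Family name, image, and sizes are placeholders; nothing is sent to AWS.


def task_definition(launch_type: str, image: str) -> dict:
    container = {
        "name": "web",
        "image": image,
        "essential": True,
        "portMappings": [{"containerPort": 80, "protocol": "tcp"}],
    }
    params = {
        "family": "web-app",
        "containerDefinitions": [container],
        "requiresCompatibilities": [launch_type],
    }
    if launch_type == "FARGATE":
        # Fargate requires awsvpc networking and task-level CPU/memory;
        # 256 CPU units with 512 MiB is one valid combination.
        params.update(networkMode="awsvpc", cpu="256", memory="512")
    elif launch_type == "EC2":
        # On your own EC2 instances, bridge networking with a per-container
        # memory limit (in MiB) is a common choice.
        params["networkMode"] = "bridge"
        container["memory"] = 512
    else:
        raise ValueError(f"unsupported launch type: {launch_type}")
    return params
```

With credentials configured, `boto3.client("ecs").register_task_definition(**task_definition("FARGATE", "nginx:latest"))` would register the task definition. Fargate tasks must use `awsvpc` networking and declare CPU and memory at the task level. On your own EC2 instances, other network modes and per-container limits are allowed.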

Platform security

Nearly all platform features are secured through role- and policy-based access control. You can also launch the underlying VMs and clusters in a Virtual Private Cloud (AWS, Google Cloud) or Virtual Network (Azure) to isolate your workloads.

2.2.2. Execution

Deploying containerized workloads

All the platforms support both CLI- and console-based deployment of containerized workloads. Amazon ECS also offers a CLI that provides a Docker Compose-like mechanism for developing and deploying multi-container applications.

Support for quick lift and shift based container workload deployment

If you want to launch a container quickly, consider Azure Container Instances, which requires no VMs and no higher-level services. For a lift-and-shift approach, you can also run containers on Google Compute Engine VMs that use either a standard OS image or Container-Optimized OS.
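Both lift-and-shift options can be driven from their command-line tools. The sketch below only assembles the argument lists for `az container create` (Azure Container Instances) and `gcloud compute instances create-with-container` (a Compute Engine VM that runs a container). The resource group, names, zone, and image are placeholders. Once the Azure CLI or Google Cloud SDK is installed and authenticated, you would run a command with `subprocess.run(cmd, check=True)`.

```python
# Sketch: CLI argument lists for a quick container launch (not executed).
# Resource group, names, zone, and image are placeholders.
import shlex


def azure_container_instance(resource_group: str, name: str, image: str, port: int) -> list[str]:
    # A container group on Azure Container Instances; no VMs to create.
    return [
        "az", "container", "create",
        "--resource-group", resource_group,
        "--name", name,
        "--image", image,
        "--ports", str(port),
    ]


def gce_container_vm(name: str, image: str, zone: str) -> list[str]:
    # A Compute Engine VM that starts the given container image at boot.
    return [
        "gcloud", "compute", "instances", "create-with-container", name,
        f"--container-image={image}",
        f"--zone={zone}",
    ]


if __name__ == "__main__":
    print(shlex.join(azure_container_instance("demo-rg", "hello", "nginx:latest", 80)))
    print(shlex.join(gce_container_vm("hello-vm", "nginx:latest", "us-central1-a")))
```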

Support for Container Orchestration features such as auto-scaling, auto-healing

Amazon ECS fully supports container orchestration, with features such as auto-scaling, auto-healing, load balancing, and container health checks.

Azure Container Instances and containers on Google Compute Engine provide only some container orchestration features. For full container orchestration, both providers expect you to use their Kubernetes service.

Platform Health Monitoring

Amazon ECS health monitoring is available through CloudWatch and CloudTrail, whereas on Azure you can use Azure Portal to monitor Azure Container Instances. For Google, you can use the Google Cloud Console to monitor activities and view logs for your container VM instances.

2.3. Kubernetes as a Service Platforms

Google was one of the early adopters of containerization technology. It ran containers in production on its internal orchestration systems for years before open-sourcing Kubernetes, which builds on that experience. Kubernetes has been adopted by all major Public Cloud providers, and each offers it as a managed Kubernetes as a Service platform: Amazon Elastic Kubernetes Service (EKS), Azure Kubernetes Service (AKS), and Google Kubernetes Engine (GKE).

2.3.1. Management

Ease of set-up, administration, and integration with Cloud services

From a management and ease-of-operations perspective, all three Cloud Platforms are winners. All of them follow a very similar Kubernetes cluster creation and set-up process, as shown in Figure 3 below:

Kubernetes cluster creation and set-up process


Using the Cloud console, you first create a Kubernetes control plane and then add worker nodes. The control plane is managed by the Cloud platform and is made highly available by distributing its nodes across availability zones.

Google provides more granular node placement when you create the control plane and worker nodes: you can choose which availability zones and regions to use when placing the cluster nodes.

After the cluster is created, you can perform cluster management operations with the Kubernetes CLI (kubectl) and related tools. The rest of the Kubernetes functionality is the same as in the underlying open-source platform.
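The set-up flow described above (create the cluster, then manage it with kubectl) can be sketched with each provider's CLI. The sketch assumes `gcloud` for GKE and `az` for AKS. For EKS it assumes `eksctl`, a separate open-source CLI, because creating an EKS cluster with the plain AWS CLI also requires pre-created IAM roles and subnets. Cluster names, regions, zones, and node counts are placeholders, and the functions only build argument lists.

```python
# Sketch: create a managed Kubernetes cluster, then fetch kubectl credentials.
# Returns (create_cmd, credentials_cmd) argument lists; run with subprocess.run.
# Names, regions, zones, and node counts are placeholders.


def gke_commands(name: str, region: str, zones: list[str], nodes_per_zone: int):
    # --node-locations pins worker nodes to specific zones in the region;
    # for a regional cluster, --num-nodes is the count *per zone*.
    create = ["gcloud", "container", "clusters", "create", name,
              "--region", region,
              "--node-locations", ",".join(zones),
              "--num-nodes", str(nodes_per_zone)]
    creds = ["gcloud", "container", "clusters", "get-credentials", name,
             "--region", region]
    return create, creds


def eks_commands(name: str, region: str, nodes: int):
    # eksctl writes kubeconfig itself; update-kubeconfig is the AWS CLI
    # equivalent for other machines or users.
    create = ["eksctl", "create", "cluster", "--name", name,
              "--region", region, "--nodes", str(nodes)]
    creds = ["aws", "eks", "update-kubeconfig", "--name", name, "--region", region]
    return create, creds


def aks_commands(resource_group: str, name: str, nodes: int):
    create = ["az", "aks", "create", "--resource-group", resource_group,
              "--name", name, "--node-count", str(nodes), "--generate-ssh-keys"]
    creds = ["az", "aks", "get-credentials", "--resource-group", resource_group,
             "--name", name]
    return create, creds
```

The GKE variant also illustrates the more granular node placement: `--node-locations` selects the zones within the region. Once the credentials command has run, the same `kubectl` commands work against any of the three clusters.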

Each Kubernetes Cloud platform is integrated with other Cloud services such as Container Registry, Access Control and Management, Networking, and Load Balancing.

Underlying VM infrastructure management

The answer is again “yes” and “no”: the Kubernetes control plane is completely managed by the Cloud platform, but you need to create the worker nodes that are part of the Kubernetes cluster.

Platform security

Each Kubernetes Cloud platform is secured at several layers. Google, Amazon, and Azure all integrate Kubernetes with their own identity and access control mechanisms, and Azure also supports integrating Azure Active Directory with Kubernetes RBAC. Kubernetes cluster components can be isolated by placing them in a virtual private cloud.
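As an example of Kubernetes RBAC, the sketch below defines a namespaced read-only Role and binds it to a group. On AKS with Azure Active Directory integration, the group subject can be an Azure AD group's object ID. The namespace, names, and the all-zero ID are placeholders. Because the manifests are plain dictionaries, they can be dumped to JSON and applied with `kubectl apply -f`.

```python
# Sketch: a read-only Role and a RoleBinding to a (placeholder) group ID.
import json


def read_only_role(namespace: str) -> dict:
    return {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "Role",
        "metadata": {"name": "pod-reader", "namespace": namespace},
        "rules": [
            # "" is the core API group, which contains pods.
            {"apiGroups": [""], "resources": ["pods", "pods/log"],
             "verbs": ["get", "list", "watch"]},
        ],
    }


def bind_group(namespace: str, group_id: str) -> dict:
    return {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "RoleBinding",
        "metadata": {"name": "pod-reader-binding", "namespace": namespace},
        "subjects": [
            {"kind": "Group", "name": group_id,
             "apiGroup": "rbac.authorization.k8s.io"},
        ],
        "roleRef": {"kind": "Role", "name": "pod-reader",
                    "apiGroup": "rbac.authorization.k8s.io"},
    }


if __name__ == "__main__":
    print(json.dumps(read_only_role("dev"), indent=2))
    print(json.dumps(bind_group("dev", "00000000-0000-0000-0000-000000000000"), indent=2))
```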

2.3.2. Execution

Support for Container Orchestration features such as auto-scaling, auto-healing

Auto-scaling and load balancing are supported by the underlying Kubernetes platform. Horizontal Pod auto-scaling is supported on all three clouds. Google also supports Vertical Pod auto-scaling, which you can configure either to only recommend CPU and memory settings or to let Google apply those changes automatically.
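Horizontal Pod auto-scaling is driven by a simple ratio. The Kubernetes documentation gives the rule as desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), with no change when the ratio is within a tolerance (0.1 by default). The sketch below implements just that rule plus the min/max bounds. The controller's other behavior (stabilization windows, missing metrics, pods that are not yet ready) is deliberately left out.

```python
# Sketch: the core Horizontal Pod Autoscaler replica calculation only.
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to the target: keep the current replica count.
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # The HPA's minReplicas/maxReplicas bound the result.
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # 4 pods averaging 90% CPU against a 60% target -> scale out to 6.
    print(desired_replicas(4, 90, 60))
```

In the usage line, the ratio is 90 / 60 = 1.5, so the autoscaler asks for ceil(4 × 1.5) = 6 pods.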

For load balancing, all the Cloud platforms integrate their managed load balancer service with Kubernetes Ingress controllers.
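To use the managed load balancer through Kubernetes, you typically declare an Ingress resource. The sketch below builds a minimal `networking.k8s.io/v1` Ingress that routes all paths for one host to one Service. The host, service name, and port are placeholders. The provider-specific `ingressClassName` or annotations that select a particular cloud controller are omitted.

```python
# Sketch: a minimal Ingress manifest; host, service, and port are placeholders.
# Provider-specific ingressClassName/annotations are intentionally omitted.
import json


def ingress(host: str, service: str, port: int) -> dict:
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": f"{service}-ingress"},
        "spec": {
            "rules": [{
                "host": host,
                "http": {"paths": [{
                    "path": "/",
                    "pathType": "Prefix",  # match every path under "/"
                    "backend": {"service": {"name": service, "port": {"number": port}}},
                }]},
            }],
        },
    }


if __name__ == "__main__":
    print(json.dumps(ingress("shop.example.com", "web", 80), indent=2))
```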

Support for deployment mechanisms

Google GKE supports deployments through the Kubernetes CLI as well as Helm. It can also be used with ‘kompose’, an open-source tool from the Kubernetes community that converts Docker Compose files into Kubernetes resources. Amazon EKS supports the Kubernetes CLI as a deployment option, and Azure AKS supports Kubernetes CLI, Helm Chart, and Draft based deployments.

Portability of workloads to other Kubernetes environments

All three Kubernetes Cloud Service platforms are certified by the Cloud Native Computing Foundation (CNCF) as conformant Kubernetes, so workloads built on them are portable to other conformant Kubernetes environments.

Platform health monitoring

Google provides GKE monitoring through Stackdriver Logging and Monitoring. On Azure, Kubernetes clusters are monitored through Azure Monitor for containers. On Amazon, logging and API call monitoring for EKS clusters are handled by CloudWatch and CloudTrail, respectively.

In addition, Kubernetes clusters can be monitored with Prometheus as well as with third-party tools such as AppDynamics and Datadog.
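Prometheus collects metrics by scraping a text endpoint (conventionally `/metrics`) exposed by each target. In practice you would use an official Prometheus client library. The hand-written sketch below only shows the shape of the text exposition format, and the metric and label names are examples.

```python
# Sketch of the Prometheus text exposition format (hand-written for
# illustration; real exporters should use an official client library).


def _escape_label(value: str) -> str:
    # Label values escape backslash, double quote, and line feed.
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def _escape_help(text: str) -> str:
    # HELP text escapes backslash and line feed only.
    return text.replace("\\", "\\\\").replace("\n", "\\n")


def render_metric(name: str, kind: str, help_text: str, samples) -> str:
    """Render one metric family; samples is a list of (labels_dict, value)."""
    lines = [f"# HELP {name} {_escape_help(help_text)}", f"# TYPE {name} {kind}"]
    for labels, value in samples:
        if labels:
            label_str = ",".join(f'{k}="{_escape_label(str(v))}"' for k, v in labels.items())
            lines.append(f"{name}{{{label_str}}} {value}")
        else:
            lines.append(f"{name} {value}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_metric(
        "http_requests_total", "counter", "Total HTTP requests.",
        [({"method": "get", "code": "200"}, 1027), ({"method": "post", "code": "500"}, 3)],
    ), end="")
```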

How will I be charged?

All the Cloud providers charge for the compute, storage, and network resources used by the worker nodes in the Kubernetes cluster. At the time of writing, Amazon charges $0.20 per hour for each EKS cluster you create, which covers the control plane and master management. Google (GKE) and Azure (AKS) do not charge for Kubernetes master and control plane management.
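The charging model above reduces to simple arithmetic. The sketch below estimates a monthly bill. It takes every rate as an input rather than hard-coding prices, since provider prices change. It also assumes the common approximation of 730 hours per month (8,760 hours / 12). Use a control-plane rate of zero for providers that do not charge for it.

```python
# Sketch: monthly Kubernetes cost estimate from hourly rates (all rates are
# caller-supplied inputs; 730 h/month is an approximation).
from decimal import Decimal, ROUND_HALF_UP

HOURS_PER_MONTH = Decimal(730)


def monthly_cost(clusters: int, control_plane_hourly: Decimal,
                 nodes: int, node_hourly: Decimal) -> Decimal:
    control_plane = clusters * control_plane_hourly * HOURS_PER_MONTH
    workers = nodes * node_hourly * HOURS_PER_MONTH
    # Decimal avoids binary floating-point error; round to cents at the end.
    return (control_plane + workers).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
```

For instance, two clusters at a hypothetical control-plane fee of $0.10 per hour, plus three worker nodes at a hypothetical $0.05 per hour, come to $255.50 for the month.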

3. Container Orchestration Platforms on Private Cloud

Read the next part of this blog series, where we cover the various Container Orchestration Platforms available on Private Cloud and the key features that can help you identify the right platform for your particular use case.

If you’d like to explore a bit more, check out the Product Modernization Hub and how your business can leverage the cloud to catalyze growth with Xoriant’s cloud enablement offerings.

You may also reach out to us at PE@Xoriant.com and our experts will schedule a FREE product assessment session to provide you with a custom approach as per your needs.
